Model 2 - Hand Landmark Detection

Overview

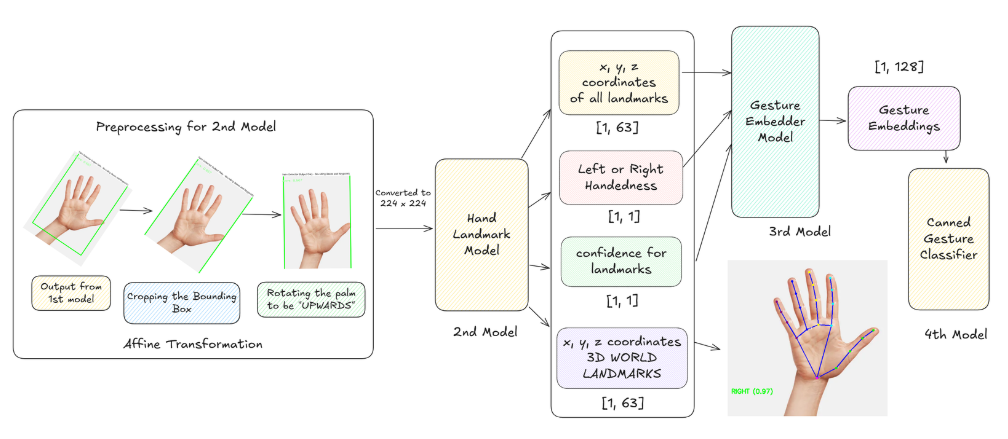

Once a hand is located by Model 1, the Hand Landmark Detection model is used to find its detailed structure. This is the most computationally intensive model in the pipeline, responsible for predicting the precise 3D coordinates of 21 key hand landmarks from a cropped image of a single hand.

Model Specifications

- Model File: hand_landmarks_detector.xml

- Architecture: Custom CNN with attention mechanisms optimized for landmark detection

- Purpose: Predict precise 3D coordinates of 21 key hand landmarks from cropped hand regions

- Computational Intensity: Most resource-intensive model in the pipeline

Figure: Function diagram for Model 2 processing pipeline.


Input/Output Specifications

Input Requirements

- Format: [1, 224, 224, 3], a cropped and warped image of a single hand

- Resolution: 224x224 pixels (standardized input size)

- Source: Generated using the rect_points from Model 1 (Hand Detector)

- Preprocessing: Affine transformation and image warping from original frame

Output Format

The model produces four distinct outputs providing comprehensive hand analysis:

- Identity: [1, 63], the 21 landmarks as pixel coordinates [x, y, z] relative to the 224x224 input crop (63 values total: 21 landmarks × 3 coordinates each)

- Identity_1: [1, 1], confidence score indicating the presence of a hand in the crop (a single float representing hand presence probability)

- Identity_2: [1, 1], confidence score for handedness classification (a single float used to decide left vs. right hand)

- Identity_3: [1, 63], world landmarks giving 3D coordinates in meters (real-world spatial coordinates for depth-aware applications)
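The four outputs can be unpacked into per-landmark arrays before any further processing. The following is a minimal sketch that makes two assumptions. First, the runtime returns a dict keyed by the output names listed above. Second, the 63 values in Identity and Identity_3 are interleaved per landmark (x0, y0, z0, x1, ...). `parse_landmark_outputs` is a hypothetical helper, not a function from this project.

```python
import numpy as np

NUM_LANDMARKS = 21


def parse_landmark_outputs(outputs):
    """Unpack the four raw output tensors of the landmark model.

    Hypothetical helper: assumes `outputs` is a dict keyed by output name and
    that the 63 values are interleaved per landmark (x0, y0, z0, x1, ...).
    """
    # Identity: [1, 63] -> (21, 3) pixel coordinates in the 224x224 crop
    landmarks_px = np.asarray(outputs["Identity"], dtype=np.float32).reshape(NUM_LANDMARKS, 3)
    # Identity_1 / Identity_2: [1, 1] -> plain Python floats
    presence = float(np.asarray(outputs["Identity_1"]).ravel()[0])
    handedness = float(np.asarray(outputs["Identity_2"]).ravel()[0])
    # Identity_3: [1, 63] -> (21, 3) world coordinates in meters
    world_m = np.asarray(outputs["Identity_3"], dtype=np.float32).reshape(NUM_LANDMARKS, 3)
    return landmarks_px, presence, handedness, world_m
```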

21-Point Hand Landmark Model

Landmark Structure

The model detects landmarks following the MediaPipe hand model specification:

Wrist (1 point)

- Point 0: Wrist center - base reference point

Thumb (4 points)

- Point 1: CMC joint (base) - thumb base connection

- Point 2: MCP joint - thumb metacarpophalangeal joint

- Point 3: IP joint - thumb interphalangeal joint

- Point 4: Thumb tip - thumb fingertip

Index Finger (4 points)

- Point 5: MCP joint (base) - index finger base

- Point 6: PIP joint - proximal interphalangeal joint

- Point 7: DIP joint - distal interphalangeal joint

- Point 8: Index tip - index fingertip

Middle Finger (4 points)

- Point 9: MCP joint (base) - middle finger base

- Point 10: PIP joint - proximal interphalangeal joint

- Point 11: DIP joint - distal interphalangeal joint

- Point 12: Middle tip - middle fingertip

Ring Finger (4 points)

- Point 13: MCP joint (base) - ring finger base

- Point 14: PIP joint - proximal interphalangeal joint

- Point 15: DIP joint - distal interphalangeal joint

- Point 16: Ring tip - ring fingertip

Pinky (4 points)

- Point 17: MCP joint (base) - pinky base

- Point 18: PIP joint - proximal interphalangeal joint

- Point 19: DIP joint - distal interphalangeal joint

- Point 20: Pinky tip - pinky fingertip
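The index layout above can be stored as constants so that later stages refer to joints by name rather than by number. This sketch links the wrist to each finger base and then chains along each finger. MediaPipe's own connection list also links the knuckles across the palm, and that link is omitted here. `FINGERS`, `FINGERTIPS` and `skeleton_edges` are hypothetical names.

```python
WRIST = 0

# Joint indices per finger, ordered from base to tip (see the list above).
FINGERS = {
    "thumb": (1, 2, 3, 4),
    "index": (5, 6, 7, 8),
    "middle": (9, 10, 11, 12),
    "ring": (13, 14, 15, 16),
    "pinky": (17, 18, 19, 20),
}

# The last joint of each finger is its tip.
FINGERTIPS = {name: joints[-1] for name, joints in FINGERS.items()}


def skeleton_edges():
    """Return bones as (parent, child) index pairs: wrist -> base -> ... -> tip."""
    edges = []
    for joints in FINGERS.values():
        chain = (WRIST,) + joints
        edges.extend(zip(chain[:-1], chain[1:]))
    return edges
```

With this layout, each finger contributes four bones, which gives 20 edges that together touch all 21 landmarks.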

Processing Pipeline

The Hand Landmark Detection model runs as a two-stage pipeline that turns each detected hand region into landmark coordinates:

- Region Rectification and Cropping (Function 1): Image warping and hand region extraction

- Landmark Detection and Smoothing (Function 2): 21-point detection with temporal stabilization

Technical Implementation

Key Functions & Logic

1. Image Warping

- Function: warp_rect_img in hand_landmark.py

- Process: Performs an affine transformation on the original full-resolution camera frame

- Result: "Cuts out" the hand and straightens it, creating the 224x224 input
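The warping step can be illustrated with a pure-NumPy sketch. This is not the project's warp_rect_img. The sketch solves for the affine map from three corner correspondences and then inverse-maps every crop pixel into the frame. It assumes the hand rectangle is given as the frame-pixel positions of its top-left, top-right and bottom-right corners. That corner convention and the name `warp_rect_img_sketch` are hypothetical. The sketch uses nearest-neighbour sampling for brevity.

```python
import numpy as np


def affine_from_points(src, dst):
    """Return the 2x3 matrix M with dst_i = M @ [x_i, y_i, 1] for three point pairs."""
    src = np.asarray(src, dtype=np.float64)
    dst = np.asarray(dst, dtype=np.float64)
    A = np.hstack([src, np.ones((3, 1))])  # 3x3 system, one row per point
    return np.linalg.solve(A, dst).T  # solve A @ M.T = dst, then transpose to 2x3


def warp_rect_img_sketch(frame, corners, size=224):
    """Cut a (possibly rotated) hand rectangle out of `frame` as a size x size crop.

    corners: frame (x, y) of the rectangle's top-left, top-right and
    bottom-right corners (hypothetical convention for this sketch).
    """
    crop_corners = [(0, 0), (size, 0), (size, size)]
    # Map crop coordinates -> frame coordinates (inverse mapping leaves no holes).
    M = affine_from_points(crop_corners, corners)
    ys, xs = np.mgrid[0:size, 0:size]
    # Sample at pixel centres: 3 x N homogeneous coordinates.
    pts = np.stack([xs.ravel() + 0.5, ys.ravel() + 0.5, np.ones(xs.size)])
    fx, fy = M @ pts
    h, w = frame.shape[:2]
    # Nearest-neighbour lookup, clamped to the frame borders.
    xi = np.clip(np.floor(fx).astype(int), 0, w - 1)
    yi = np.clip(np.floor(fy).astype(int), 0, h - 1)
    return frame[yi, xi].reshape(size, size, *frame.shape[2:])
```

In a production pipeline, OpenCV's `cv2.getAffineTransform` and `cv2.warpAffine` do the same job with proper interpolation.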

2. Model Inference

- Function: _process_landmarks_and_gestures in gesture_engine.py

- Process: The warped hand image is fed into the hand_landmarks_detector model

- Output: Raw landmark coordinates and confidence scores
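The inference call mainly involves shape bookkeeping. A single 224x224x3 crop gains a leading batch dimension to match the [1, 224, 224, 3] input, and the four named outputs are checked on return. In this sketch, `infer` is a hypothetical callable that stands in for whatever runtime call the project uses. The sketch does not rescale pixel values, because the expected input value range is not stated here.

```python
import numpy as np

INPUT_SHAPE = (1, 224, 224, 3)  # NHWC, as listed under Input Requirements
REQUIRED_OUTPUTS = {"Identity", "Identity_1", "Identity_2", "Identity_3"}


def run_landmark_model(infer, crop):
    """Batch one warped hand crop and run it through `infer` (hypothetical callable)."""
    crop = np.asarray(crop, dtype=np.float32)
    if crop.shape != INPUT_SHAPE[1:]:
        raise ValueError(f"expected crop of shape {INPUT_SHAPE[1:]}, got {crop.shape}")
    batch = crop[np.newaxis, ...]  # (224, 224, 3) -> (1, 224, 224, 3)
    outputs = infer(batch)  # assumed to return a dict of named output arrays
    missing = REQUIRED_OUTPUTS - set(outputs)
    if missing:
        raise KeyError(f"model outputs missing: {sorted(missing)}")
    return outputs
```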

3. Post-processing

- Function: lm_postprocess in hand_landmark.py

- Process: Normalizes pixel landmarks to the [0, 1] range

- Features: Extracts handedness and presence scores

- Smoothing: Applies Exponential Moving Average (EMA) for temporal stability
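The normalization and score extraction can be sketched as follows. This is not lm_postprocess itself. The sketch drops crops whose presence score falls below the presence_threshold default of 0.7 from Configuration Parameters. It then divides the crop-pixel coordinates by 224. Scaling z by the same factor, to keep it on the same scale as x, is an assumption. EMA smoothing is left out here. `postprocess_sketch` is a hypothetical name.

```python
import numpy as np

CROP_SIZE = 224  # side length of the square input crop


def postprocess_sketch(landmarks_px, presence, handedness, presence_threshold=0.7):
    """Return normalized landmarks plus scores, or None if no hand is present."""
    if presence < presence_threshold:
        return None  # the crop most likely does not contain a hand; drop it
    lm = np.asarray(landmarks_px, dtype=np.float32).reshape(21, 3)
    # Divide x, y (and z, by assumption) by the crop size so that x and y
    # fall in [0, 1] for landmarks inside the crop.
    return {
        "landmarks": lm / CROP_SIZE,
        "presence": float(presence),
        "handedness": float(handedness),
    }
```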

Coordinate Systems

Pixel Coordinates (Identity Output)

- X-axis: Left to right within 224x224 crop (0 to 224)

- Y-axis: Top to bottom within 224x224 crop (0 to 224)

- Z-axis: Depth relative to the wrist (smaller, more negative values are closer to the camera)

- Normalization: Converted to [0, 1] range during post-processing
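Normalized crop coordinates only become useful for on-screen interaction once they are mapped back into the camera frame. Because the crop was produced by an affine warp, the same three corner correspondences define the return mapping. This sketch assumes that crop corners (0, 0), (1, 0) and (1, 1) correspond to the frame-pixel top-left, top-right and bottom-right corners of the hand rectangle. `crop_to_frame` is a hypothetical helper, and z is left unchanged.

```python
import numpy as np


def crop_to_frame(lm_norm, top_left, top_right, bottom_right):
    """Map normalized crop landmarks (x, y in [0, 1]) to frame pixel coordinates."""
    lm_norm = np.asarray(lm_norm, dtype=np.float64)
    src = np.array([[0, 0, 1], [1, 0, 1], [1, 1, 1]], dtype=np.float64)
    dst = np.array([top_left, top_right, bottom_right], dtype=np.float64)
    M = np.linalg.solve(src, dst).T  # 2x3 affine: frame_xy = M @ [x, y, 1]
    xy1 = np.hstack([lm_norm[:, :2], np.ones((len(lm_norm), 1))])
    return xy1 @ M.T  # (N, 2) frame pixel coordinates
```

For an axis-aligned 224x224 rectangle at (100, 50), the crop centre (0.5, 0.5) lands at frame pixel (212, 162). Rotated rectangles work the same way, because the affine map carries the rotation.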

World Coordinates (Identity_3 Output)

- Units: Real-world coordinates in meters

- Reference: 3D positions with the origin at the hand's approximate geometric center, following the MediaPipe world-landmark convention

- Applications: Depth-aware gesture recognition and AR/VR integration

- Precision: Millimeter-level accuracy for close-range detection
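World landmarks are metric, so distances between them can be compared against fixed thresholds in meters. Pixel distances, by contrast, change as the hand moves toward or away from the camera. This minimal sketch measures thumb-tip to index-tip distance, a common pinch cue. It assumes Identity_3 uses the same 21-point order and x, y, z interleaving as Identity. `pinch_distance_m` is a hypothetical helper.

```python
import numpy as np

THUMB_TIP, INDEX_TIP = 4, 8  # indices from the 21-point layout


def pinch_distance_m(world_landmarks):
    """Euclidean distance between thumb tip and index tip, in meters."""
    w = np.asarray(world_landmarks, dtype=np.float64).reshape(21, 3)
    return float(np.linalg.norm(w[THUMB_TIP] - w[INDEX_TIP]))
```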

Model Architecture Details

Network Design

- Input Layer: 224x224x3 RGB image tensor

- Feature Extraction: Multi-scale convolutional layers

- Attention Mechanisms: Focus on hand regions and joint locations

- Regression Heads: Separate heads for landmarks, presence, and handedness

- Output Processing: Multi-task learning with shared feature extraction

Key Features

- Sub-pixel Accuracy: Landmarks are regressed as continuous coordinates, so localization is not limited to whole-pixel positions

- Rotation Invariance: Handles various hand orientations through input warping

- Scale Normalization: Consistent output regardless of input hand size

- Multi-task Learning: Simultaneous landmark detection and hand classification

Temporal Stabilization

Exponential Moving Average (EMA) Smoothing

- Purpose: Reduces jitter and maintains smooth landmark trajectories

- Implementation: Applied when a matching hand region is found in a previous frame

- IoU Matching: Uses Intersection over Union to match hands across frames

- Adaptive Smoothing: Smoothing strength adapts based on motion speed
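IoU matching and EMA smoothing can be combined as follows. This sketch rests on three assumptions. First, hands from the previous frame are kept as axis-aligned boxes (x_min, y_min, x_max, y_max). Second, temporal_smoothing (0.3) is the weight given to the new frame. Third, "adaptive" means raising that weight as motion speed grows, so fast motion is not lagged. The `speed_gain` term and all function names are hypothetical.

```python
import numpy as np


def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    iy = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = ix * iy
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def ema_update(prev, curr, alpha=0.3, speed_gain=0.0):
    """EMA step: out = a * curr + (1 - a) * prev, with a raised by motion speed."""
    prev = np.asarray(prev, dtype=np.float64)
    curr = np.asarray(curr, dtype=np.float64)
    # Mean per-landmark displacement as a crude motion-speed estimate.
    speed = float(np.linalg.norm(curr - prev, axis=-1).mean())
    a = min(1.0, alpha + speed_gain * speed)  # faster motion -> trust new data more
    return a * curr + (1.0 - a) * prev


def match_and_smooth(prev_tracks, box, landmarks, iou_threshold=0.3, alpha=0.3):
    """Smooth against the best-overlapping previous hand, or pass through unsmoothed."""
    best = max(prev_tracks, key=lambda t: iou(t["box"], box), default=None)
    if best is not None and iou(best["box"], box) >= iou_threshold:
        return ema_update(best["landmarks"], landmarks, alpha)
    return np.asarray(landmarks, dtype=np.float64)  # new hand: nothing to smooth with
```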

Frame-to-Frame Consistency

- Tracking: Maintains landmark identity across video frames

- Interpolation: Handles temporary occlusions and detection gaps

- Quality Control: Validates landmark consistency and anatomical constraints

Integration with Pipeline

Input Interface

- Hand Regions: Receives rect_points from Model 1 (Hand Detector)

- Original Frame: Accesses full-resolution camera frame for warping

- Configuration: Processing parameters and quality thresholds

Output Interface

- Landmark Data: Provides 21-point hand structure to Model 3

- Quality Metrics: Hand presence and confidence scores

- Handedness: Left/right hand classification

- World Coordinates: 3D spatial data for advanced applications

Configuration Parameters

Detection Settings

- landmark_confidence: Minimum confidence threshold for landmarks (default: 0.5)

- presence_threshold: Hand presence detection threshold (default: 0.7)

- handedness_threshold: Left/right classification threshold (default: 0.5)

Smoothing Parameters

- temporal_smoothing: EMA smoothing factor (default: 0.3)

- iou_threshold: Frame matching threshold (default: 0.3)

- max_smoothing_frames: Maximum frames for smoothing history (default: 5)

Processing Options

- world_coordinates: Enable world coordinate output (default: true)

- anatomical_validation: Enable pose validation (default: true)

- batch_processing: Process multiple hands simultaneously (default: false)
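The settings above could be grouped into a single validated object. The field names and defaults below are taken from this section. The `LandmarkConfig` class and its range checks are a hypothetical sketch and not the project's configuration code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LandmarkConfig:
    # Detection settings
    landmark_confidence: float = 0.5
    presence_threshold: float = 0.7
    handedness_threshold: float = 0.5
    # Smoothing parameters
    temporal_smoothing: float = 0.3
    iou_threshold: float = 0.3
    max_smoothing_frames: int = 5
    # Processing options
    world_coordinates: bool = True
    anatomical_validation: bool = True
    batch_processing: bool = False

    def __post_init__(self):
        # Thresholds and the EMA factor are fractions, so reject values outside [0, 1].
        for name in ("landmark_confidence", "presence_threshold",
                     "handedness_threshold", "temporal_smoothing", "iou_threshold"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [0, 1], got {value}")
        if self.max_smoothing_frames < 1:
            raise ValueError("max_smoothing_frames must be >= 1")
```

A bounded buffer such as `collections.deque(maxlen=cfg.max_smoothing_frames)` is one simple way to cap the smoothing history at the configured length.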

Next Steps

With detailed hand landmarks established, the pipeline proceeds to:

- Model 3 (Gesture Embedder): Generate compact feature representations

- Gesture Classification: Identify specific hand poses and movements

- Temporal Analysis: Track dynamic gesture patterns over time

- Real-time Applications: Enable immediate gesture-based interactions