Introduction

Welcome to the Gesture Control with OpenVINO documentation. This comprehensive guide covers the implementation of gesture control systems using Intel's OpenVINO toolkit.

Overview

This documentation is organized into several key sections:

- Model Architecture: Learn about the four main models that power the gesture recognition system

- Gesture Types: Understand static and dynamic gesture recognition

- Control System: Explore the GUI and input mapping components

- Performance: Analyze metrics and optimization on Intel devices

Getting Started

To get started with gesture control, we recommend reading through the model architecture sections first, then exploring the gesture control system implementation.

Prerequisites

- Intel OpenVINO toolkit

- Python 3.7+

- Camera device for gesture capture

- Intel hardware (recommended for optimal performance)
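A quick way to confirm the prerequisites is a small script that checks the Python version and asks OpenVINO which inference devices it can see. This is a minimal sketch, assuming an OpenVINO release that exposes `Core` either at the top level (2023.1 and later) or under `openvino.runtime` (2022.x). The function names `check_prerequisites` and `_load_core` are hypothetical, and the camera requirement is not checked here.

```python
import importlib.util
import sys
from typing import Any, Dict, Tuple


def _load_core():
    """Return the OpenVINO Core class (hypothetical helper covering two import paths)."""
    try:
        from openvino import Core  # OpenVINO 2023.1 and later
    except ImportError:
        from openvino.runtime import Core  # older 2022.x releases
    return Core


def check_prerequisites(min_python: Tuple[int, int] = (3, 7)) -> Dict[str, Any]:
    """Report whether Python is new enough and which OpenVINO devices are visible."""
    report: Dict[str, Any] = {
        "python_ok": sys.version_info[:2] >= min_python,
        "openvino_installed": importlib.util.find_spec("openvino") is not None,
        "devices": [],
    }
    if report["openvino_installed"]:
        try:
            # available_devices lists device names such as "CPU" or "GPU".
            report["devices"] = list(_load_core()().available_devices)
        except Exception as exc:  # keep the check non-fatal on broken installs
            report["error"] = str(exc)
    return report


if __name__ == "__main__":
    print(check_prerequisites())
```

An empty `devices` list with `openvino_installed` set to true usually points to a runtime or driver problem rather than a missing package.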

Let's begin your journey into gesture control technology!

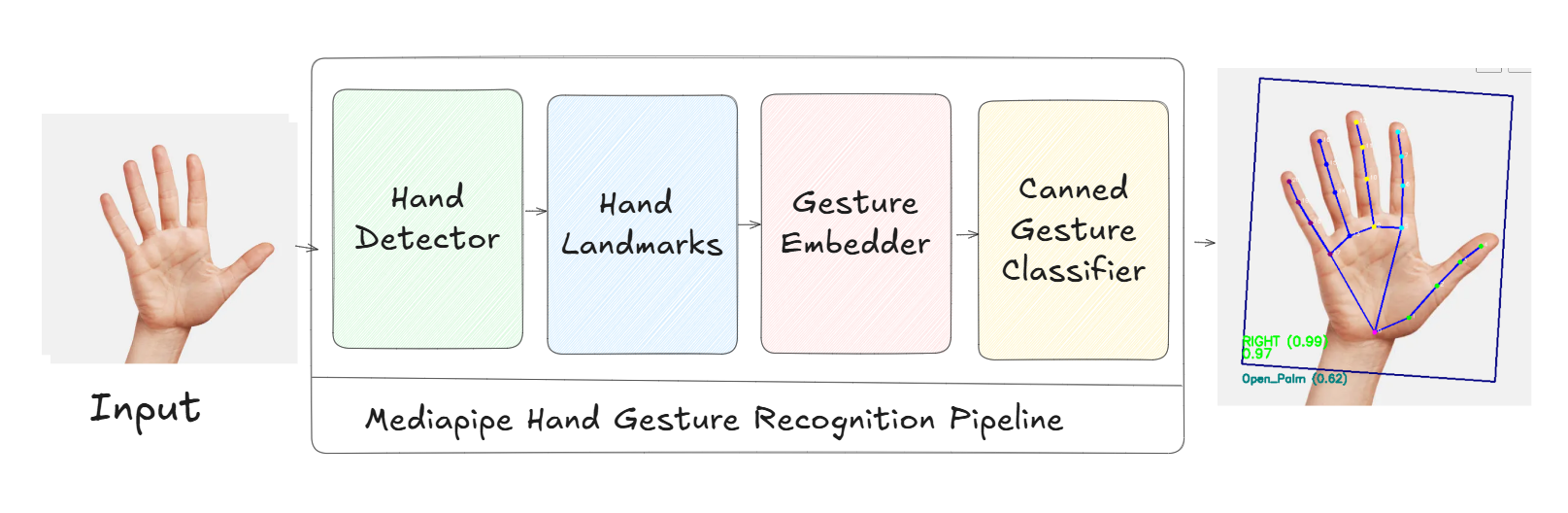

Gesture Recognition Pipeline at a Glance

This pipeline passes each camera frame through four stages (Hand Detector, Hand Landmarks, Gesture Embedder, and Canned Gesture Classifier) to produce the gesture outputs used by the control system.

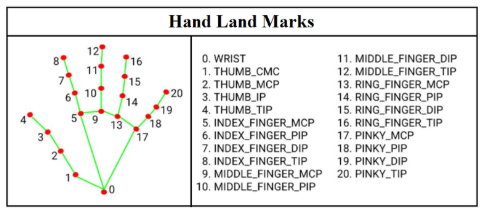
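The four-stage flow can be sketched as a chain of callables in which each stage consumes the previous stage's output. This is a hypothetical illustration, not the project's implementation: the `GesturePipeline` and `GestureResult` names, the box format, the placeholder label, and the `min_score` cutoff are all assumptions, and in practice each stage would wrap an OpenVINO model.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple

# Assumed data shapes (hypothetical, for illustration only).
Box = Tuple[float, float, float, float]       # x_min, y_min, x_max, y_max
Landmarks = List[Tuple[float, float, float]]  # 21 (x, y, z) points per hand
Embedding = List[float]


@dataclass
class GestureResult:
    box: Box
    landmarks: Landmarks
    label: str
    score: float


@dataclass
class GesturePipeline:
    # Each stage is injected so it can wrap a real model or a stub.
    detect_hands: Callable[[Any], List[Box]]                 # Hand Detector
    predict_landmarks: Callable[[Any, Box], Landmarks]       # Hand Landmarks
    embed: Callable[[Landmarks], Embedding]                  # Gesture Embedder
    classify: Callable[[Embedding], Tuple[str, float]]       # Canned Gesture Classifier
    min_score: float = 0.5                                   # assumed confidence cutoff

    def run(self, frame: Any) -> List[GestureResult]:
        results: List[GestureResult] = []
        for box in self.detect_hands(frame):
            landmarks = self.predict_landmarks(frame, box)
            if len(landmarks) != 21:
                continue  # skip malformed landmark output
            label, score = self.classify(self.embed(landmarks))
            if score >= self.min_score:  # drop low-confidence predictions
                results.append(GestureResult(box, landmarks, label, score))
        return results


if __name__ == "__main__":
    # Stub stages so the data flow can be exercised without model files.
    pipeline = GesturePipeline(
        detect_hands=lambda frame: [(0.1, 0.1, 0.5, 0.6)],
        predict_landmarks=lambda frame, box: [(0.0, 0.0, 0.0)] * 21,
        embed=lambda lm: [c for point in lm for c in point],
        classify=lambda emb: ("thumbs_up", 0.9),  # placeholder label
    )
    for result in pipeline.run(frame=None):
        print(result.label, result.score)
```

Keeping the stages as injected callables lets each model be swapped or stubbed independently, which is what allows the usage example to run without any model files.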

Hand Landmarks (21 points)

The landmark model predicts 21 key points for each detected hand. These landmarks feed the gesture embedding and classification stages, and they also drive the custom dynamic-gesture logic used by the application modes.