Model 1 - Hand Detector

Overview

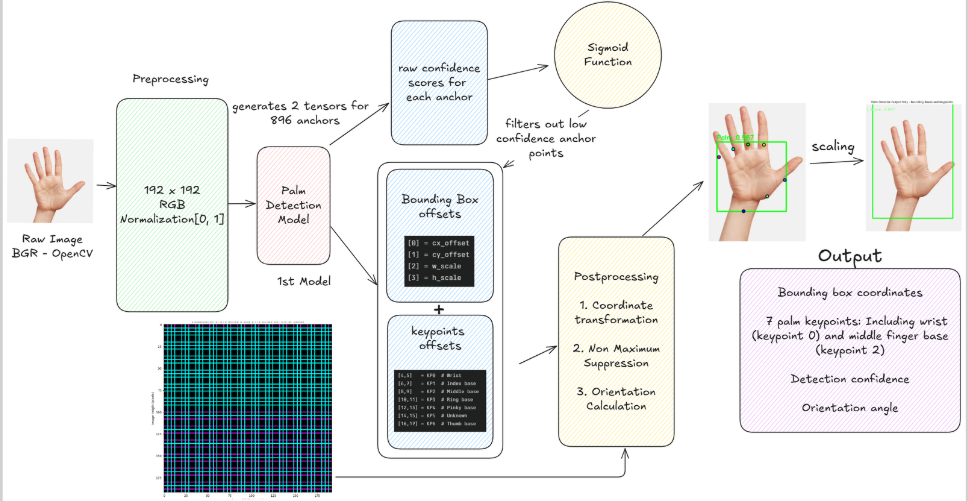

The Hand Detector is the first model in our gesture recognition pipeline, and every later stage depends on its output. It is a fast, lightweight Single Shot Detector (SSD) that generates bounding boxes around any hands in the image, so the more complex landmark model only has to process those hand regions instead of the entire image.

Model Specifications

- Model File: `hand_detector.xml`

- Architecture: Single Shot Detector (SSD) based on MobileNet

- Purpose: Generate bounding boxes around detected hands in the camera frame

- Processing Strategy: Fast detection to enable efficient downstream processing

Input/Output Specifications

Input Requirements

- Format: `[1, 192, 192, 3]`, the full camera frame resized to a small square

- Resolution: 192x192 pixels (standardized input size)

- Color Space: RGB format

- Preprocessing: Frame resizing and normalization
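The input requirements above can be sketched as a small preprocessing function. This is a minimal illustration rather than the pipeline's actual code: the function name `preprocess` is hypothetical, nearest-neighbour sampling stands in for whatever resize the real pipeline uses, and scaling pixel values to [0, 1] is an assumption, since the normalization range is not stated here.

```python
import numpy as np

INPUT_SIZE = 192  # model input resolution from the spec above


def preprocess(frame_rgb: np.ndarray, size: int = INPUT_SIZE) -> np.ndarray:
    """Resize an HxWx3 uint8 RGB frame to size x size and add a batch axis.

    Nearest-neighbour sampling keeps the sketch free of image libraries.
    ASSUMPTION: pixel values are normalized to [0, 1].
    """
    h, w, _ = frame_rgb.shape
    # Source row/column index for every output row/column.
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    resized = frame_rgb[rows[:, None], cols[None, :]]  # (size, size, 3)
    scaled = resized.astype(np.float32) / 255.0
    return scaled[np.newaxis, ...]  # (1, size, size, 3)
```

Calling `preprocess` on a 480x640 frame yields a float32 tensor of shape `(1, 192, 192, 3)`, matching the format listed above. Note that a plain resize does not preserve the aspect ratio; whether the real pipeline pads the frame first is not specified here.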

Output Format

The model produces two primary outputs:

- `Identity`: `[1, 2016, 18]`. Raw bounding box and keypoint data for 2016 potential anchor boxes. Contains encoded detection information for post-processing.

- `Identity_1`: `[1, 2016, 1]`. Confidence scores for each of the 2016 anchor boxes. Used for filtering and ranking detections.
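To make the shape of the first output more concrete, the sketch below decodes a single 18-value row against its anchor. The layout is an assumption modelled on MediaPipe-style palm detectors (4 box values followed by 7 keypoints as x/y pairs, all expressed in input pixels relative to the anchor centre); the function name `decode_detection` is hypothetical, and the value ordering should be checked against the actual model.

```python
from typing import List, Sequence, Tuple

INPUT_SIZE = 192.0  # model input resolution from the spec above
NUM_KEYPOINTS = 7   # (18 - 4) / 2; ASSUMED split of the 18 values

Box = Tuple[float, float, float, float]
Point = Tuple[float, float]


def decode_detection(raw: Sequence[float], anchor: Point) -> Tuple[Box, List[Point]]:
    """Decode one row of the raw box output against its anchor centre.

    ASSUMED layout: [dx, dy, w, h, kp0_x, kp0_y, ..., kp6_x, kp6_y],
    in input pixels, with offsets relative to the anchor centre.
    Returns (box, keypoints) in normalized [0, 1] frame coordinates,
    where box = (x_min, y_min, x_max, y_max).
    """
    ax, ay = anchor
    cx = raw[0] / INPUT_SIZE + ax
    cy = raw[1] / INPUT_SIZE + ay
    w = raw[2] / INPUT_SIZE
    h = raw[3] / INPUT_SIZE
    box = (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)
    keypoints = [
        (raw[4 + 2 * k] / INPUT_SIZE + ax, raw[5 + 2 * k] / INPUT_SIZE + ay)
        for k in range(NUM_KEYPOINTS)
    ]
    return box, keypoints
```

For example, a row with zero offsets and a 96x96 pixel box at an anchor centred on `(0.5, 0.5)` decodes to a box covering the central half of the frame in each dimension.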

Architecture Details

SSD Framework

- Anchor-based Detection: Uses pre-generated anchor boxes at multiple scales

- Multi-scale Detection: Detects hands at various sizes within the frame

- Lightweight Design: Optimized for real-time performance

- Feature Extraction: Efficient feature extraction for hand detection

Detection Pipeline

The Hand Detector model operates through a structured pipeline that processes camera frames and outputs clean hand detection results. The pipeline consists of four main stages:

- Inference Stage: Raw model prediction

- Decoding Stage: Converting raw outputs to interpretable data

- Filtering Stage: Removing redundant and low-confidence detections

- Rotation Calculation: Preparing regions for landmark detection

Figure: Visualization of the Hand Detector processing stages.

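The rotation calculation stage is the least self-explanatory of the four, so here is a hedged sketch of one common approach. It assumes, as in MediaPipe-style pipelines, that the angle is derived from two detected keypoints (taken here to be the wrist and the base of the middle finger) and that an upright hand should map to a rotation of zero. The function name `hand_rotation` and the choice of keypoints are assumptions, not confirmed details of this pipeline.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def hand_rotation(wrist: Point, middle_base: Point,
                  target_angle: float = math.pi / 2) -> float:
    """Return the rotation (radians) that would make the hand point up.

    Image y grows downwards, so dy is negated before atan2.
    ASSUMPTION: wrist and middle-finger base are the reference keypoints.
    """
    dx = middle_base[0] - wrist[0]
    dy = middle_base[1] - wrist[1]
    angle = target_angle - math.atan2(-dy, dx)
    # Wrap the result into [-pi, pi).
    return angle - 2 * math.pi * math.floor((angle + math.pi) / (2 * math.pi))
```

With the middle-finger base directly above the wrist the function returns 0; for a hand pointing to the right of the image it returns pi/2. The landmark stage can then rotate the crop by this angle so the hand is presented in a consistent orientation.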

Integration with Pipeline

The Hand Detector connects to the rest of the gesture recognition pipeline as follows:

- Input Processing: Receives camera frames from video capture

- Detection: Identifies hand regions with confidence scores

- Output: Provides clean hand regions to Model 2 (Hand Landmarks)

- Efficiency: Enables selective processing of relevant image areas

Technical Implementation

Anchor Generation

The model uses an anchor generation scheme based on SSD principles:

- Multiple scales and aspect ratios for comprehensive coverage

- Strategic placement across the feature map

- MediaPipe-compatible anchor configuration

- Optimized for hand detection scenarios
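As an illustration of how 2016 anchors can arise from a 192x192 input, the sketch below generates anchor centres on feature-map grids. The stride layout `(8, 16, 16, 16)` with two anchors per layer is an assumption borrowed from MediaPipe-style palm detector configurations; it is used here because it reproduces the 2016 count (24·24·2 + 12·12·6), not because this document confirms it. The function name `generate_anchors` is hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def generate_anchors(input_size: int = 192,
                     strides: Sequence[int] = (8, 16, 16, 16),
                     anchors_per_layer: int = 2,
                     offset: float = 0.5) -> List[Tuple[float, float]]:
    """Return normalized (cx, cy) anchor centres.

    ASSUMPTION: consecutive layers with the same stride share one grid,
    and every anchor has a fixed unit size, so only centres are stored.
    """
    anchors: List[Tuple[float, float]] = []
    i = 0
    while i < len(strides):
        stride = strides[i]
        # Count how many consecutive layers use this stride.
        j = i
        while j < len(strides) and strides[j] == stride:
            j += 1
        per_cell = anchors_per_layer * (j - i)
        grid = math.ceil(input_size / stride)
        for y in range(grid):
            for x in range(grid):
                centre = ((x + offset) / grid, (y + offset) / grid)
                anchors.extend([centre] * per_cell)
        i = j
    return anchors
```

The anchors are produced in the same order as the rows of the model outputs are assumed to follow: the fine 24x24 grid first, then the coarse 12x12 grid, row by row.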

Post-Processing Pipeline

- Bounding Box Decoding: Converts raw outputs to coordinate space

- Score Processing: Applies sigmoid activation and threshold filtering

- Non-Maximum Suppression: Eliminates redundant detections

- Coordinate Transformation: Prepares data for downstream models
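The score processing and suppression steps can be sketched as follows. This is a simplified illustration: it uses plain greedy non-maximum suppression, whereas the actual pipeline may use a different variant (MediaPipe-style pipelines often use a weighted form). The function names are hypothetical, the default thresholds are the ones listed under Configuration Options, and boxes are assumed to be `(x_min, y_min, x_max, y_max)` tuples that have already been decoded.

```python
import math
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def sigmoid(x: float) -> float:
    # Clamp the logit so math.exp cannot overflow.
    x = max(-60.0, min(60.0, x))
    return 1.0 / (1.0 + math.exp(-x))


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def filter_detections(boxes: Sequence[Box], logits: Sequence[float],
                      confidence_threshold: float = 0.7,
                      nms_threshold: float = 0.5,
                      max_hands: int = 2) -> List[Tuple[float, Box]]:
    """Sigmoid the raw scores, drop weak ones, then apply greedy NMS."""
    scored = [(sigmoid(l), b) for l, b in zip(logits, boxes)]
    scored = [sb for sb in scored if sb[0] >= confidence_threshold]
    scored.sort(key=lambda sb: sb[0], reverse=True)
    kept: List[Tuple[float, Box]] = []
    for score, box in scored:
        # Keep a box only if it does not overlap a stronger kept box.
        if all(iou(box, k[1]) <= nms_threshold for k in kept):
            kept.append((score, box))
        if len(kept) == max_hands:
            break
    return kept
```

Sorting by score before suppression means that, of any group of overlapping candidates, only the most confident one survives.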

Configuration Options

Detection Parameters

- `confidence_threshold`: Minimum confidence for valid detection (default: 0.7)

- `nms_threshold`: Non-maximum suppression threshold (default: 0.5)

- `max_hands`: Maximum number of hands to detect (default: 2)

- `input_size`: Input image resolution (default: 192x192)
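One way to group these parameters in code is a small immutable configuration object. The class name `HandDetectorConfig` and the validation rules are hypothetical; only the parameter names and defaults come from the list above.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class HandDetectorConfig:
    """Detection parameters with the documented defaults."""
    confidence_threshold: float = 0.7
    nms_threshold: float = 0.5
    max_hands: int = 2
    input_size: Tuple[int, int] = (192, 192)

    def __post_init__(self) -> None:
        # ASSUMED sanity checks; the real pipeline may validate differently.
        for name in ("confidence_threshold", "nms_threshold"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [0, 1], got {value}")
        if self.max_hands < 1:
            raise ValueError("max_hands must be at least 1")
```

For instance, `HandDetectorConfig(max_hands=1)` would restrict detection to a single hand while leaving the other defaults unchanged.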

Next Steps

After successful hand detection, the pipeline proceeds to:

- Model 2 (Hand Landmarks): Extract detailed 21-point hand landmarks

- Quality Assessment: Validate detection quality and stability

- Temporal Tracking: Maintain hand identity across frames

- Region Optimization: Prepare optimal regions for landmark extraction